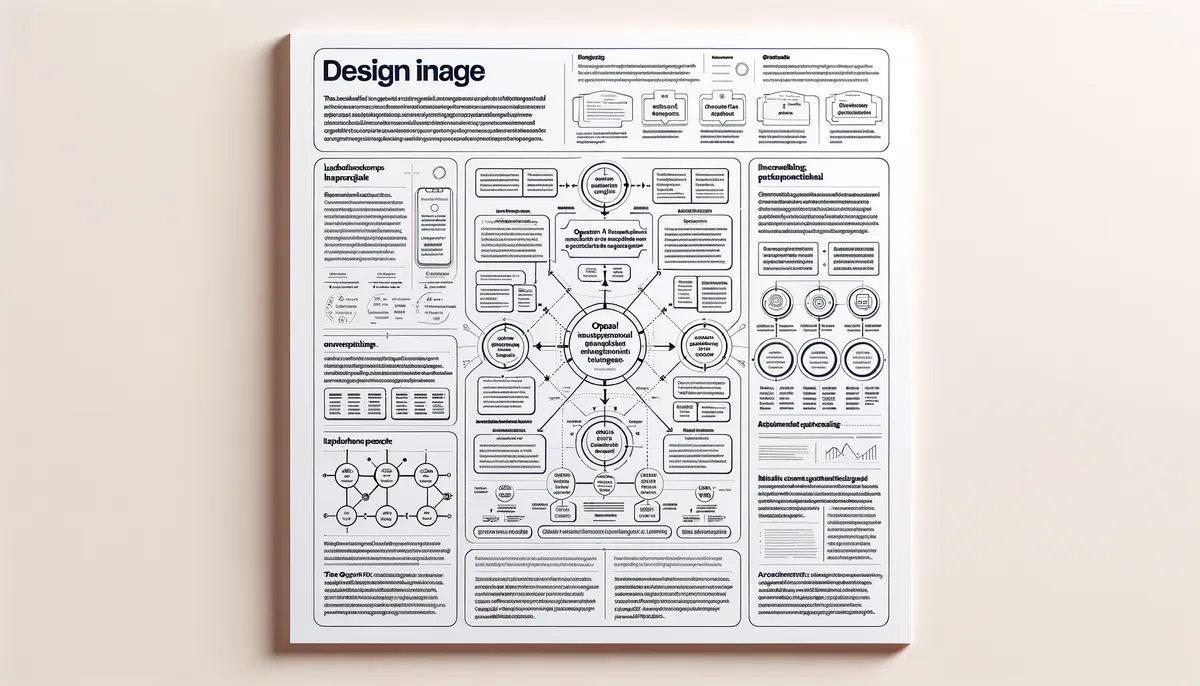

Understanding OpenAI's Model Spec Framework

OpenAI has recently published its Model Spec framework, a public document describing how the company intends its AI models to behave. The framework aims to make those expectations transparent and to guide how AI systems should operate, responding to the concerns that arise as these technologies become more integrated into daily life. Its goal is a balanced approach that prioritizes AI safety while still accounting for user freedom and accountability.

This initiative responds to the growing complexity of AI systems. As models become more capable, the potential for misuse and unintended consequences grows with them. OpenAI's Model Spec gives AI researchers, developers, and policymakers a structured reference for the behavior expected of models and for the ethical implications of that behavior.

The Role of AI Safety in Model Behavior

AI safety is a cornerstone of the Model Spec framework. OpenAI acknowledges that as AI systems' capabilities expand, robust safety measures become even more critical. The framework emphasizes designing models that not only perform their intended tasks effectively but also do so without causing harm or perpetuating biases.

In OpenAI's approach, the Model Spec sets out criteria for evaluating model behavior, backed by testing and validation processes. These criteria are meant to help AI systems behave safely across different contexts and to reduce the risks associated with automation and machine learning. By prioritizing safety, OpenAI aims to build public trust in AI technologies and, in turn, their broader acceptance and adoption.

User Freedom vs. Accountability in AI

The balance between user freedom and accountability is a central theme in discussions of the Model Spec framework. Users should be free to use AI tools in ways that serve their needs and goals, but accountability mechanisms are also needed to prevent misuse and to hold developers responsible for the consequences of their models.

OpenAI's Model Spec tries to manage this tension by setting clear guidelines that promote responsible use while preserving user autonomy. This dual focus matters because it lets innovation proceed without compromising ethical standards. For instance, the framework encourages developers to implement features that allow for user customization and flexibility, while embedding checks and balances that keep that flexibility accountable.

Future Implications for AI Development

The introduction of the Model Spec framework is likely to have significant implications for the future of AI development. By setting a public standard for model behavior, OpenAI is not only driving the conversation about ethical AI but also influencing the trajectory of research and innovation in the field. This framework could pave the way for regulatory measures that align with these standards, creating a more cohesive approach to AI governance.

As more organizations adopt similar frameworks, we may observe a shift in how AI models are developed and deployed. The expectation for transparency and adherence to defined behavioral standards could lead to more robust models that prioritize ethical considerations alongside technical performance. This evolution may encourage collaboration among stakeholders, including researchers, policymakers, and industry leaders, fostering a unified approach to AI development.

Challenges and Opportunities in AI Regulation

While the Model Spec framework represents a proactive step in addressing AI safety and accountability, it also underscores the challenges of regulating rapidly evolving technologies. One primary obstacle is the dynamic nature of AI itself; as models and their applications continue to advance, regulatory frameworks must be adaptable to keep pace.

Additionally, ensuring that regulations do not stifle innovation is a significant challenge. Striking the right balance will require ongoing dialogue among stakeholders to fully understand the implications of AI technologies. OpenAI’s Model Spec provides a foundation for this conversation by offering a structured approach that can be referenced and built upon in regulatory discussions.

Moreover, the framework presents opportunities for international collaboration. As AI technologies transcend borders, establishing common standards through initiatives like the Model Spec can promote global cooperation in addressing the ethical challenges posed by AI.

OpenAI's Model Spec framework is a significant development in the pursuit of AI safety, user freedom, and accountability. It sets a precedent for how AI models should be designed and evaluated, balancing innovation with ethical considerations. As AI continues to evolve, the principles outlined in the Model Spec are likely to serve as a reference point for developers, researchers, and policymakers alike, helping steer artificial intelligence in a responsible and sustainable direction.

Why This Matters

This development signals a broader shift in the AI industry that could reshape how businesses and consumers interact with technology. Following how the Model Spec and similar frameworks evolve will help you anticipate how these changes might affect your work or interests.